Graphics Processing Units (GPUs) are specialised processors designed for high-speed parallel computing, which makes them essential for graphics rendering, artificial intelligence, and large-scale data processing. Their architecture, which combines thousands of cores, dedicated high-bandwidth video memory (VRAM), and programmable shaders, lets them execute repetitive numerical tasks much faster than CPUs. In India, GPUs are becoming critical digital infrastructure, supporting AI innovation, scientific research under the National Supercomputing Mission, the startup ecosystem, and large-scale digital governance platforms. With rising demand and heavy import dependence, strengthening domestic semiconductor capabilities and expanding energy-efficient data centre infrastructure are key to ensuring technological self-reliance and global competitiveness.


Picture Courtesy: The Hindu

Context:

Since Nvidia introduced the GeForce 256 in 1999, marketing it as the first GPU for video game graphics, the GPU has grown into core infrastructure for the digital economy.


Graphics Processing Unit (GPU):

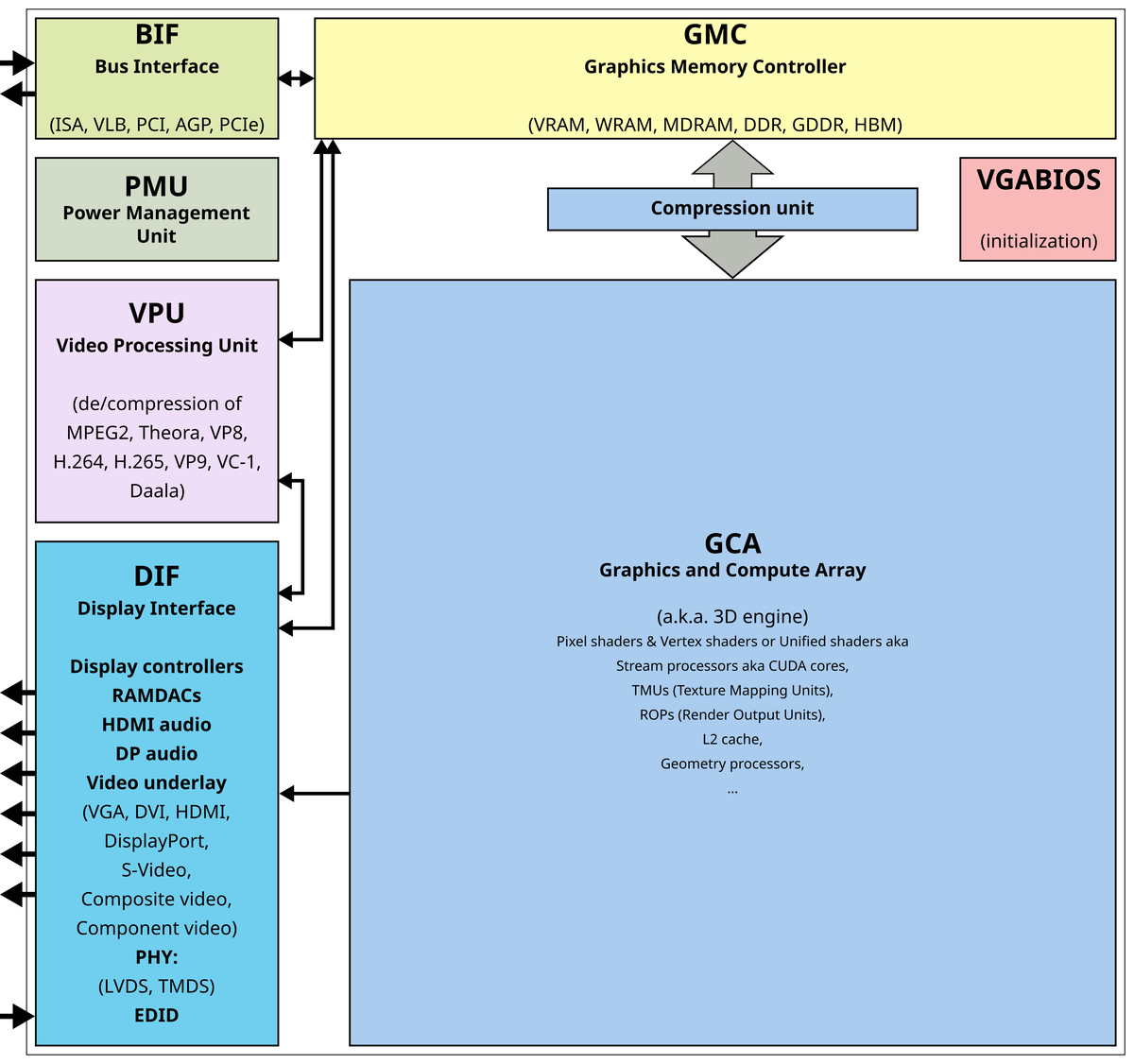

A Graphics Processing Unit (GPU) is a specialised electronic processor designed to perform a very large number of simple mathematical calculations simultaneously. Unlike a Central Processing Unit (CPU), which is optimised to handle complex tasks and manage system operations, a GPU is built for parallel processing, where the same operation must be repeated across large datasets. Initially developed to render images, videos, and animations for computer graphics, GPUs have become essential for modern workloads such as artificial intelligence, machine learning, scientific simulations, data analytics, and high-performance computing.
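To make the idea of "the same operation repeated across large datasets" concrete, the sketch below imitates, on an ordinary CPU, how GPU programming models such as CUDA organise work: one small function (a "kernel") is launched once per data element, and each thread works out which element it owns from its block index and thread index. This is a conceptual simulation only, assuming Python; the names `saxpy_kernel` and `launch_kernel` are hypothetical, the chosen operation is SAXPY (a·x + y), and here the threads run one after another rather than simultaneously.

```python
# Conceptual, CPU-only simulation of the GPU "same instruction, many data" model.
# Nothing here runs on a GPU; saxpy_kernel and launch_kernel are hypothetical names.

def saxpy_kernel(i, a, x, y, out):
    """Work done by ONE simulated GPU thread: compute exactly one element, index i."""
    if i < len(x):               # guard: the grid may contain more threads than elements
        out[i] = a * x[i] + y[i]

def launch_kernel(kernel, n_blocks, threads_per_block, *args):
    """Mimic a GPU launch: every (block, thread) pair runs the same kernel.
    On real hardware these calls run concurrently; here they run sequentially."""
    for block_idx in range(n_blocks):
        for thread_idx in range(threads_per_block):
            # Same formula CUDA code uses: blockIdx * blockDim + threadIdx
            global_idx = block_idx * threads_per_block + thread_idx
            kernel(global_idx, *args)

x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [10.0, 20.0, 30.0, 40.0, 50.0]
out = [0.0] * len(x)

threads_per_block = 4
n_blocks = (len(x) + threads_per_block - 1) // threads_per_block  # ceiling division -> 2
launch_kernel(saxpy_kernel, n_blocks, threads_per_block, 2.0, x, y, out)
print(out)  # [12.0, 24.0, 36.0, 48.0, 60.0]
```

Launching more threads than elements (8 threads for 5 elements here) and guarding with `if i < len(x)` mirrors a common pattern in real GPU code, where the grid is rounded up to a whole number of blocks.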

Working of a GPU:

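For graphics work, a GPU processes data through a rendering pipeline, which converts the description of a 3D scene into the final image displayed on the screen. The pipeline runs in the following stages:

Vertex processing: each vertex (corner point) of the 3D models is transformed from scene coordinates to its position on the screen.

Rasterisation: the transformed primitives, usually triangles, are converted into fragments, i.e. the candidate pixels that each triangle covers.

Fragment (Pixel) shading: a shader program computes the colour of every fragment, for example from textures and lighting.

Frame buffer output: the final pixel colours, after checks such as depth testing, are written to the frame buffer, the memory region that is sent to the display.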
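The four stages above can be sketched as a toy, CPU-only renderer for a single triangle. It is a minimal illustration under stated assumptions, not how a real driver or GPU is programmed: the function names are hypothetical, vertex processing is a simple orthographic projection (depth is dropped), rasterisation uses the common edge-function test at pixel centres, the "colour" is a text character, and a real GPU would run each stage on thousands of vertices and pixels in parallel.

```python
# Toy sketch of the rendering pipeline for one triangle on an 8x8 "screen".
# Hypothetical helper names; screen y grows downward, so row 0 prints first (top).

WIDTH, HEIGHT = 8, 8

def vertex_stage(vertices, scale):
    """Vertex processing: map 3D (x, y, z) to 2D screen coordinates.
    Assumes an orthographic projection (z is ignored) followed by scaling."""
    return [(x * scale, y * scale) for (x, y, z) in vertices]

def edge(a, b, p):
    """Edge function: signed area telling which side of edge a->b the point p lies on."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])

def rasterise(tri):
    """Rasterisation: list the pixels whose centres lie inside the triangle (fragments)."""
    a, b, c = tri
    fragments = []
    for py in range(HEIGHT):
        for px in range(WIDTH):
            p = (px + 0.5, py + 0.5)                      # sample at the pixel centre
            w0, w1, w2 = edge(b, c, p), edge(c, a, p), edge(a, b, p)
            inside_ccw = w0 >= 0 and w1 >= 0 and w2 >= 0
            inside_cw = w0 <= 0 and w1 <= 0 and w2 <= 0   # accept either winding order
            if inside_ccw or inside_cw:
                fragments.append((px, py))
    return fragments

def fragment_shader(px, py):
    """Fragment shading: choose a colour for one covered pixel (a fixed '#' here)."""
    return "#"

def render(vertices):
    framebuffer = [["." for _ in range(WIDTH)] for _ in range(HEIGHT)]
    screen_tri = vertex_stage(vertices, scale=8)
    for px, py in rasterise(screen_tri):
        framebuffer[py][px] = fragment_shader(px, py)    # frame buffer output
    return framebuffer

tri = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
for row in render(tri):
    print("".join(row))
```

The output is a staircase of 36 `#` pixels: 8 in the top row, shrinking by one per row down to 1 in the bottom row.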

GPU Location:

A GPU can be either a discrete component or an integrated unit within a system. A discrete GPU is a separate graphics card that plugs into the motherboard through a high-speed interface such as PCIe and has its own VRAM and cooling system; this configuration is common in gaming computers, workstations, and AI servers. An integrated GPU, on the other hand, is built into the same chip as the CPU and shares the system’s main memory. Integrated GPUs are widely used in laptops, smartphones, and other compact devices where power efficiency and saving space are important.
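A back-of-the-envelope calculation shows why a discrete GPU carries its own VRAM rather than fetching data over PCIe each time. Both bandwidth figures below are assumed round numbers for illustration only (roughly a PCIe 4.0 x16 link in one direction, and a hypothetical mid-range card's on-board memory); real values vary widely by PCIe generation, lane count, and memory type, and the calculation ignores latency and protocol overheads.

```python
# Idealised transfer-time arithmetic: time = data size / bandwidth.
# ASSUMED figures, for illustration only; not measurements of any specific product.

PCIE_BANDWIDTH_GB_S = 32.0    # assumed: about a PCIe 4.0 x16 link, one direction
VRAM_BANDWIDTH_GB_S = 500.0   # assumed: hypothetical on-board VRAM bandwidth

def transfer_time_ms(size_gb, bandwidth_gb_s):
    """Time in milliseconds to move size_gb gigabytes at bandwidth_gb_s (no overheads)."""
    return size_gb / bandwidth_gb_s * 1000.0

dataset_gb = 4.0
print(f"Over PCIe: {transfer_time_ms(dataset_gb, PCIE_BANDWIDTH_GB_S):.1f} ms")  # 125.0 ms
print(f"From VRAM: {transfer_time_ms(dataset_gb, VRAM_BANDWIDTH_GB_S):.1f} ms")  # 8.0 ms
```

Under these assumptions, reading the same 4 GB from on-card memory is about 15 times faster than pulling it across the PCIe link, so a discrete GPU typically copies data once and then reuses it from VRAM. An integrated GPU avoids the copy but shares the system's main memory, and its bandwidth, with the CPU.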

Source: The Hindu

Comparison between GPU and CPU:

| Basis of Comparison | CPU (Central Processing Unit) | GPU (Graphics Processing Unit) |
|---|---|---|
| Purpose | The CPU is designed as a general-purpose processor that manages system operations, runs applications, and performs complex logical and control tasks. | The GPU is designed as a specialised processor that accelerates tasks requiring large-scale numerical computation and parallel data processing. |
| Core Architecture | A CPU contains a small number of powerful and sophisticated cores optimised for sequential processing and decision-making. | A GPU contains hundreds to thousands of smaller cores designed to perform the same instruction across many data elements simultaneously. |
| Processing Style | The CPU executes tasks sequentially or with limited parallelism, making it suitable for workflows that require frequent branching and task switching. | The GPU follows a massively parallel processing model, making it ideal for repetitive and data-intensive workloads. |
| Control Logic | A significant portion of CPU hardware is devoted to complex control mechanisms, instruction management, and task scheduling. | GPUs devote less chip area to control logic and more to repeated compute units and wide data paths to maximise throughput. |
| Memory System | CPUs rely on large cache hierarchies and moderate memory bandwidth to reduce latency across diverse tasks. | GPUs use high-bandwidth memory such as GDDR-based VRAM or HBM to move large volumes of data quickly during parallel computation. |
| Task Switching | CPUs are highly efficient at multitasking and quickly switching between different types of operations. | GPUs are less efficient at frequent task switching but highly efficient when running the same operation continuously on large datasets. |
| Best-Suited Applications | CPUs are best suited for operating systems, databases, office applications, web browsing, and general-purpose computing. | GPUs are best suited for graphics rendering, artificial intelligence, machine learning, scientific simulations, video processing, and high-performance computing. |
| Performance Focus | The CPU is optimised for low latency, completing individual complex instructions and tasks quickly. | The GPU is optimised for high throughput, completing a very large number of operations per second by running thousands of them in parallel. |
| Energy Utilisation | CPUs typically consume less power for general workloads and are designed for efficiency across varied tasks. | GPUs consume more power during intensive workloads but deliver much higher performance on parallel computations. |
| Role in Modern Computing | The CPU acts as the primary control unit or “brain” of the computer, coordinating all system functions. | The GPU functions as a computational accelerator or “workhorse,” especially critical for AI, big data, and advanced graphics. |
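The "Processing Style" and "Performance Focus" rows can be quantified with Amdahl's law, a standard model of parallel speedup that is not part of the source article: if a fraction p of a task can be parallelised across n workers, the ideal speedup is 1 / ((1 − p) + p / n). The sketch below is hedged in two ways: the workloads and core counts are hypothetical, and the model treats every worker as equally fast, whereas individual GPU cores are slower than CPU cores.

```python
# Amdahl's law: ideal speedup = 1 / ((1 - p) + p / n)
# p = fraction of the work that can run in parallel, n = number of parallel workers.
# Core counts and parallel fractions below are hypothetical, for illustration only.

def amdahl_speedup(p, n):
    """Ideal speedup when a fraction p of the work is spread evenly over n workers."""
    if not 0.0 <= p <= 1.0 or n < 1:
        raise ValueError("p must be in [0, 1] and n must be >= 1")
    return 1.0 / ((1.0 - p) + p / n)

# A branch-heavy task (50% parallel) vs. a pixel/matrix-style task (99% parallel),
# on an assumed 8-core CPU-like processor and an assumed 1000-core GPU-like processor.
for p in (0.50, 0.99):
    for n in (8, 1000):
        print(f"p={p:.2f}, n={n:>4}: speedup ~ {amdahl_speedup(p, n):.1f}x")
```

For the half-sequential task, going from 8 to 1000 workers only lifts the speedup from about 1.8x to 2.0x, because the sequential half dominates; for the 99% parallel task it rises from about 7.5x to 91.0x. This is why GPUs pay off on repetitive, data-intensive workloads, while branch-heavy work stays on the CPU.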

Importance of GPUs for India:

Conclusion:

Graphics Processing Units (GPUs) have become strategic digital infrastructure for India, enabling advancements in artificial intelligence, scientific research, digital governance, and the innovation ecosystem. Expanding access to high-performance computing, strengthening domestic semiconductor capabilities, and promoting energy-efficient data centres will be essential for achieving technological self-reliance, global competitiveness, and sustained digital economic growth.


Practice Question: Q. Graphics Processing Units (GPUs) are emerging as critical infrastructure in the age of Artificial Intelligence. Discuss. (150 words)

A Graphics Processing Unit (GPU) is a specialised processor designed to perform many calculations simultaneously. It is important because it accelerates graphics rendering, artificial intelligence, scientific simulations, and other data-intensive tasks that require high-speed parallel processing.

AI and machine learning models involve repeated mathematical operations such as matrix and tensor calculations. GPUs can perform these operations in parallel and move large volumes of data quickly, significantly reducing training and processing time.
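Matrix multiplication shows why these calculations parallelise so well: each output element C[i][j] depends only on row i of A and column j of B, so every element can be computed independently of the others. The sketch below, assuming plain Python, makes that independence explicit by building a list of separate (i, j) tasks; the helper names are hypothetical, and a GPU library would typically assign such tasks (or tiles of them) to many threads at once instead of looping.

```python
# Sketch: matrix multiplication as a set of independent dot-product tasks.
# On a GPU each (i, j) task could be handled by its own thread; here they run in a loop.

def matmul_element(A, B, i, j):
    """Work for one hypothetical GPU thread: the dot product of row i of A and column j of B."""
    return sum(A[i][k] * B[k][j] for k in range(len(B)))

def matmul(A, B):
    if len(A[0]) != len(B):
        raise ValueError("inner dimensions must match")
    rows, cols = len(A), len(B[0])
    # No task reads another task's result, so the order of execution does not matter.
    tasks = [(i, j) for i in range(rows) for j in range(cols)]
    C = [[0] * cols for _ in range(rows)]
    for i, j in tasks:
        C[i][j] = matmul_element(A, B, i, j)
    return C

A = [[1, 2], [3, 4]]
B = [[5, 6], [7, 8]]
print(matmul(A, B))  # [[19, 22], [43, 50]]
```

The number of independent tasks grows quickly with matrix size: multiplying two 1024 × 1024 matrices yields 1,048,576 separate dot products, which is exactly the kind of workload that keeps thousands of GPU cores busy.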

GPUs can be present as a separate graphics card (discrete GPU) connected to the motherboard or integrated within the same chip as the CPU, especially in laptops, smartphones, and System-on-Chip (SoC) devices.

© 2026 iasgyan. All rights reserved.