Context

- As the world rushes to make use of the latest wave of AI technologies, one piece of high-tech hardware has become a surprisingly hot commodity: the graphics processing unit, or GPU.

- A top-of-the-line GPU can sell for tens of thousands of dollars, and leading manufacturer NVIDIA has seen its market valuation soar past US$2 trillion as demand for its products surges.

Details

Background

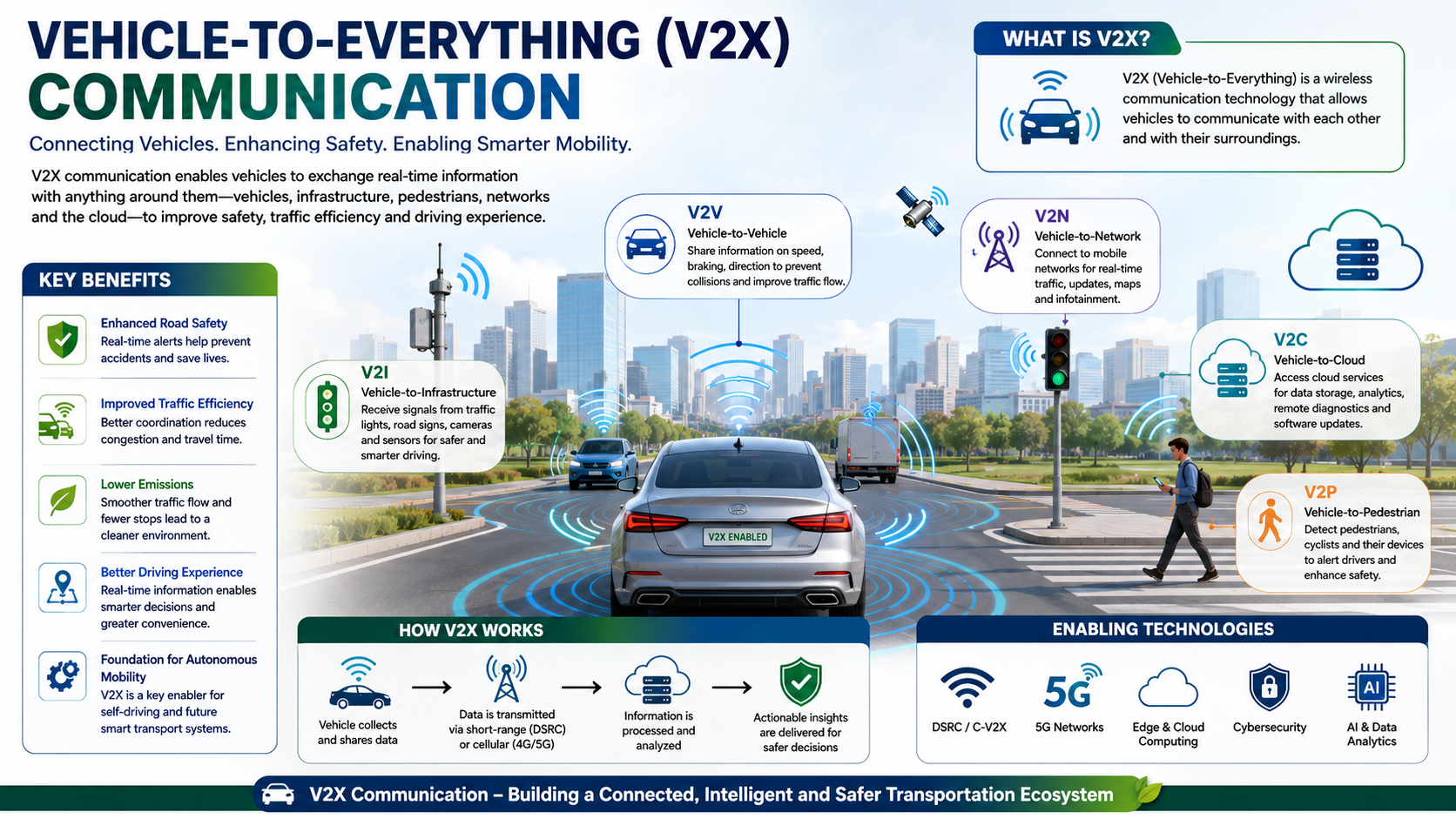

- GPUs were originally designed primarily to quickly generate and display complex 3D scenes and objects, such as those involved in video games and computer-aided design software.

- Modern GPUs also handle tasks such as decompressing video streams.

- GPUs aren’t just high-end AI products, either. There are less powerful GPUs in phones, laptops, and gaming consoles, too.

Difference Between GPUs and CPUs

- A typical modern CPU is made up of between 8 and 16 “cores”, each of which can process complex tasks in a sequential manner.

- GPUs have thousands of relatively small cores, which are designed to all work at the same time (“in parallel”) to achieve fast overall processing.

- This makes them well suited for tasks that require a large number of simple operations which can be done at the same time, rather than one after another.
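The effect of core count can be illustrated with a rough, idealized step count. The sketch below assumes every core finishes one simple operation per step and ignores real-world factors such as clock speed, memory access, and scheduling. The 4096-core GPU and the per-pixel workload are hypothetical figures chosen only for illustration.

```python
import math


def ideal_steps(num_operations: int, num_cores: int) -> int:
    """Steps needed if every core completes one operation per step."""
    return math.ceil(num_operations / num_cores)


# Hypothetical workload: one simple operation per pixel of a 1920x1080 frame.
pixels = 1920 * 1080

cpu_steps = ideal_steps(pixels, 16)    # a 16-core CPU
gpu_steps = ideal_steps(pixels, 4096)  # assumed GPU with 4096 small cores

print(f"CPU: {cpu_steps} steps, GPU: {gpu_steps} steps")
```

In this simplified model the GPU needs far fewer steps, but only because the operations are independent of one another. A task whose steps depend on each other's results would not benefit in the same way.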

Traditional GPU Flavours

- Traditional GPUs come in two main flavours.

- First, there are standalone chips, which often come in add-on cards for large desktop computers.

- Second are GPUs combined with a CPU in the same chip package, which are often found in laptops and game consoles such as the PlayStation 5.

- In both cases, the CPU controls what the GPU does.

What’s next for GPUs?

- Continued Advancements in Number-Crunching Prowess:

- The number-crunching prowess of GPUs is steadily increasing, driven by the rise in the number of cores and their operating speeds.

- These improvements are primarily propelled by advancements in chip manufacturing, with companies like TSMC in Taiwan leading the way.

- Decreasing transistor sizes enable more transistors to be packed into the same physical space, enhancing computational power.

- Evolution Beyond Traditional GPU Designs:

- While traditional GPUs excel at AI-related computation tasks, they are not always optimal.

- Accelerators specifically designed for machine learning tasks, known as “data centre GPUs,” are gaining prominence.

- These accelerators, such as those from AMD and NVIDIA, have evolved from traditional GPUs to better handle various machine learning tasks, including support for compact number formats such as "brain float" (bfloat16), a 16-bit floating-point format.

- Other accelerators like Google’s Tensor Processing Units and Tenstorrent’s Tensix Cores are purpose-built from the ground up to accelerate deep neural networks.
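To make the "brain float" format mentioned above more concrete: bfloat16 keeps the sign bit, the 8 exponent bits, and the top 7 mantissa bits of a standard 32-bit float, so it covers the same range with less precision. The sketch below emulates this in plain Python by simply truncating the low 16 bits. This is an assumption made for simplicity; real hardware typically rounds rather than truncates. The function name `to_bfloat16` is hypothetical.

```python
import struct


def to_bfloat16(value: float) -> float:
    """Return `value` as it would look after truncation to bfloat16."""
    # Pack as an IEEE 754 float32 and read the 32 raw bits back as an integer.
    (bits,) = struct.unpack(">I", struct.pack(">f", value))
    # Keep only the top 16 bits: sign (1), exponent (8), mantissa (7).
    bits &= 0xFFFF0000
    # Reinterpret the truncated bit pattern as a float32 again.
    (result,) = struct.unpack(">f", struct.pack(">I", bits))
    return result


print(to_bfloat16(3.14159))  # precision is lost after a few significant digits
print(to_bfloat16(1.0))      # values with short binary expansions survive exactly
```

The loss of precision is usually acceptable for neural network training, while halving the storage per number compared with 32-bit floats.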

- Features of Data Centre GPUs and AI Accelerators:

- Data centre GPUs and AI accelerators typically come with significantly more memory than traditional GPU add-on cards, essential for training large AI models.

- Larger AI models require more memory, contributing to improved capabilities and accuracy.

- To enhance training speed and handle even larger AI models, data centre GPUs can be pooled together to form supercomputers. This requires sophisticated software to effectively utilize the available computational power.

- Alternatively, large-scale accelerators like the “wafer-scale processor” produced by Cerebras offer another approach to handling complex AI tasks efficiently.
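The link between model size and memory can be estimated with simple arithmetic: the space needed to hold a model's weights is roughly the number of parameters multiplied by the bytes used per parameter. The sketch below uses a hypothetical 7-billion-parameter model; the function name and figures are illustrative assumptions, not measurements.

```python
def weights_memory_gb(num_parameters: int, bytes_per_parameter: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9


params = 7_000_000_000  # hypothetical model size

fp32_gb = weights_memory_gb(params, 4)  # 32-bit floats: 4 bytes each
bf16_gb = weights_memory_gb(params, 2)  # 16-bit formats: 2 bytes each

print(f"32-bit weights: {fp32_gb} GB, 16-bit weights: {bf16_gb} GB")
```

This counts the weights only. Training also has to store gradients, optimizer state, and intermediate activations, so the real requirement is considerably higher, which is one reason several accelerators are often pooled together.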

What is a GPU?

- Graphics Processing Units (GPUs) are specialized electronic circuits designed to rapidly manipulate and alter memory to accelerate the creation of images in a frame buffer intended for output to a display device.

Architecture and Components:

- Streaming Multiprocessors (SMs): The fundamental building block of a GPU, SMs consist of multiple CUDA cores (or shader cores) responsible for executing instructions in parallel.

- CUDA Cores: NVIDIA's name for the individual processing units within an SM. Each core executes one thread's instruction at a time; the GPU's parallelism comes from many such cores working simultaneously.

- Memory Subsystem: GPUs have various types of memory, including:

- Graphics Memory (VRAM): High-speed memory dedicated to storing textures, geometry, and other graphics data.

- Registers and Caches: On-chip memory used for storing data and instructions closer to the processing units, reducing latency.

- Interconnects: These are the pathways that allow communication between different components within the GPU, ensuring efficient data exchange.

GPU Programming Models:

- CUDA (Compute Unified Device Architecture): Developed by NVIDIA, CUDA is a parallel computing platform and programming model that enables developers to harness the power of NVIDIA GPUs for general-purpose processing.

- OpenCL (Open Computing Language): A cross-platform framework for programming heterogeneous systems, OpenCL allows developers to write programs that execute across CPUs, GPUs, and other accelerators.

- DirectX and Vulkan: Graphics APIs that provide low-level access to GPU resources. They are used primarily for game development but also support general-purpose computing through compute shaders.
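Programming models such as CUDA and OpenCL share one core idea: the programmer writes a small function (a "kernel") for a single element, and the hardware runs it across many threads organized into blocks. The sketch below imitates that structure in plain Python, running the threads one after another on the CPU. It is only an illustration of the indexing scheme, not GPU code, and the helper names `launch` and `add_kernel` are hypothetical.

```python
def launch(kernel, num_blocks, threads_per_block, *args):
    """Run `kernel` once per (block, thread) pair, sequentially on the CPU."""
    for block_idx in range(num_blocks):
        for thread_idx in range(threads_per_block):
            kernel(block_idx, threads_per_block, thread_idx, *args)


def add_kernel(block_idx, block_dim, thread_idx, a, b, out):
    """Each simulated thread adds exactly one pair of elements."""
    # Global index of this thread across all blocks.
    i = block_idx * block_dim + thread_idx
    # Guard: the last block may contain more threads than remaining elements.
    if i < len(out):
        out[i] = a[i] + b[i]


a = [1, 2, 3, 4, 5]
b = [10, 20, 30, 40, 50]
out = [0] * len(a)

threads_per_block = 4
# Round up so that every element is covered by some thread.
num_blocks = (len(a) + threads_per_block - 1) // threads_per_block

launch(add_kernel, num_blocks, threads_per_block, a, b, out)
print(out)
```

On a real GPU the loop in `launch` does not exist: the hardware schedules the threads across its cores, so many of them run at the same time.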

Applications of GPUs:

- Graphics Rendering: GPUs are widely used in video games, computer-aided design (CAD), and animation to generate realistic images and visual effects.

- Deep Learning and AI: GPUs excel at performing matrix operations, making them essential for training and deploying deep neural networks in applications such as image recognition, natural language processing, and autonomous driving.

- Scientific Computing: GPUs accelerate scientific simulations and computations, enabling researchers to solve complex problems in fields like physics, chemistry, and climate modeling.

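- Cryptocurrency Mining: GPUs have been widely used to mine proof-of-work cryptocurrencies, in which computationally expensive cryptographic puzzles must be solved. Ethereum was commonly mined on GPUs until it moved to proof-of-stake in 2022, while Bitcoin mining is now dominated by specialized ASIC hardware.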
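The matrix operations behind deep learning map well onto GPUs because each element of the result can be computed independently of the others. The plain-Python sketch below shows this structure on a tiny example. It is written for clarity rather than speed, and the function name `matmul` is just an illustrative choice.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    rows, inner, cols = len(a), len(b), len(b[0])
    # Every output element out[i][j] is an independent dot product of
    # row i of `a` and column j of `b`, so all of them could in principle
    # be computed at the same time by separate GPU threads.
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(cols)]
        for i in range(rows)
    ]


a = [[1, 2], [3, 4]]
b = [[5, 6], [7, 8]]
product = matmul(a, b)
print(product)
```

A neural network layer performs the same kind of computation on matrices with thousands of rows and columns, which is where thousands of parallel cores pay off.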

Recent Advancements:

- Ray Tracing: NVIDIA's RTX series introduced real-time ray tracing capabilities, enabling more realistic lighting, reflections, and shadows in video games and other applications.

- Tensor Cores: Introduced with NVIDIA's Volta architecture and carried forward in Turing and later designs, Tensor Cores are dedicated hardware for accelerating the matrix (tensor) operations essential for deep learning workloads.

- Heterogeneous Computing: Modern GPUs are increasingly being integrated with other types of processors, such as CPUs and FPGAs, to create heterogeneous computing platforms optimized for specific workloads.

Challenges and Future Trends:

- Power Efficiency: As GPUs become more powerful, managing their power consumption and thermal output becomes increasingly challenging.

- Memory Bandwidth: With the growing demand for higher resolution textures and more complex models, ensuring sufficient memory bandwidth remains a critical concern.

- AI and Machine Learning: The integration of specialized hardware for AI and machine learning tasks within GPUs is expected to continue, further blurring the lines between traditional graphics processing and general-purpose computing.
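The memory bandwidth concern can be made concrete with a back-of-the-envelope estimate: the time needed to read a block of data once is at least its size divided by the memory bandwidth, however fast the cores are. The figures below (14 GB of data and 1000 GB/s of bandwidth) are hypothetical values chosen for illustration, as is the function name.

```python
def min_read_time_ms(num_bytes: float, bandwidth_gb_per_s: float) -> float:
    """Lower bound on the time to read `num_bytes` once, in milliseconds."""
    bytes_per_ms = bandwidth_gb_per_s * 1e9 / 1000
    return num_bytes / bytes_per_ms


data_bytes = 14e9  # hypothetical: 14 GB of model weights
read_ms = min_read_time_ms(data_bytes, 1000)  # assumed 1000 GB/s of bandwidth

print(f"At least {read_ms:.1f} ms per full pass over the data")
```

If the cores can finish their arithmetic faster than this, they sit idle waiting for data, which is why bandwidth matters as much as raw compute.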


PRACTICE QUESTION

Q. Graphics Processing Units have undergone a remarkable evolution from specialized graphics accelerators to versatile processors powering a wide range of applications across industries. Discuss. (250 Words)
