Description

Disclaimer: Copyright infringement not intended.

Context:

Recently, AI researchers found that AI chatbots are not so strong against indirect prompt injection attacks.

What is Indirect Prompt Injection?

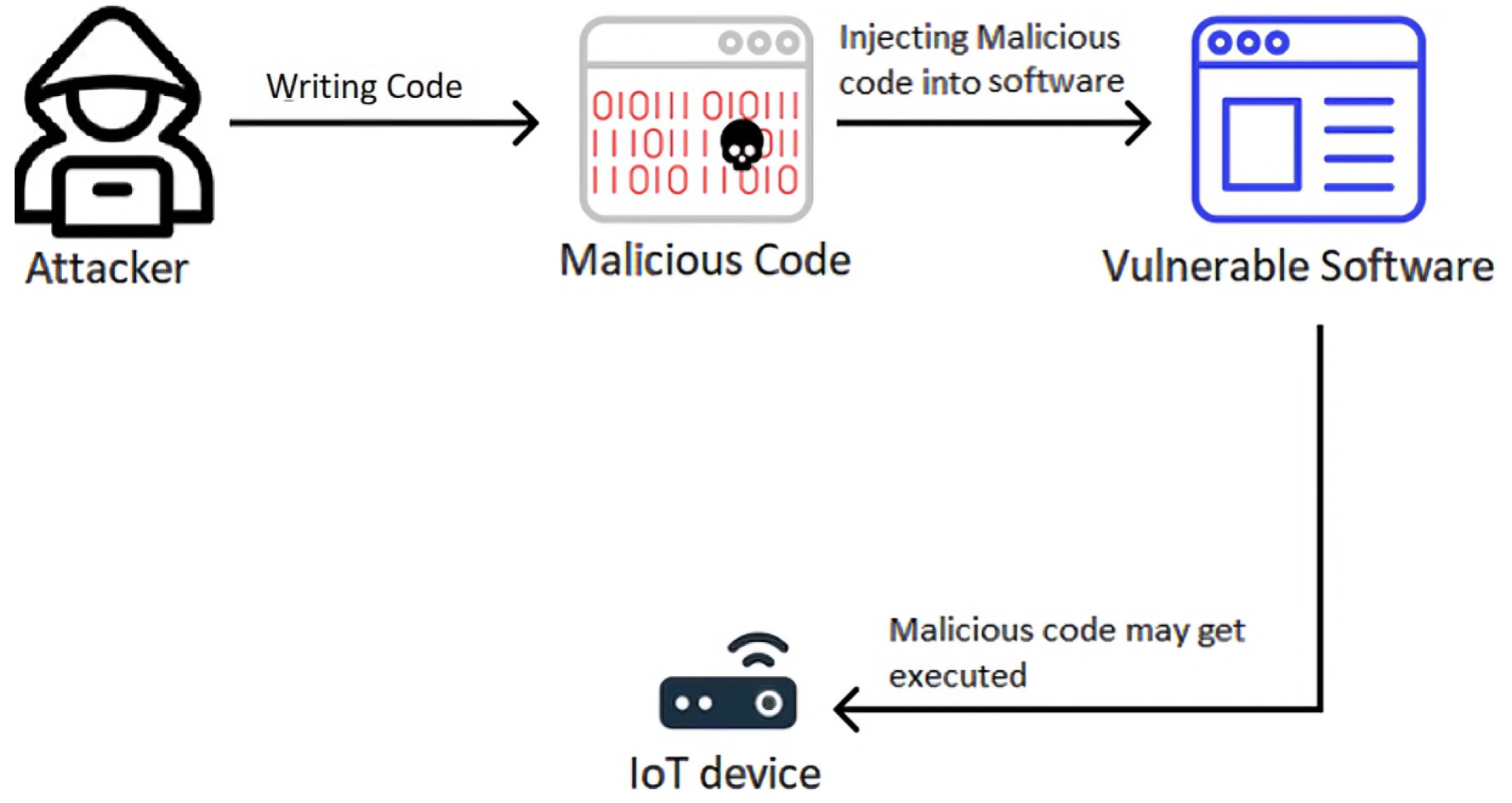

- Indirect prompt injection is a cybersecurity attack in which malicious commands are hidden within content

- This content can include text, images, or documents. This malicious commands hidden content causes the chatbot to perform wrongful actions.

How does it work?

- In direct prompt injection, AI Bots are given commands directly, but indirect injection involves hidden commands in sources itself that the AI later reads or interprets.

- This source can be external documents, web pages, or other media which the AI is asked to analyse.

- Large language models (LLMs) are more vulnerable because they are programmed to follow instructions coded in the text they process.

- Examples:

- Cybersecurity researcher Johann Rehberger showed how Google's Gemini chatbots were manipulated by commands hidden in YouTube transcripts.

- AI bots can be manipulated and used to spread misinformation or execute harmful commands such as downloading malware.

- Data breach: If it is integrated with sensitive systems, these attacks can lead to unauthorized access to private data

Way forward

- Tech companies (Google & OpenAI) are working on their systems to make it strong. But there is no foolproof solution to indirect prompt injection.

- There is a focus on developing better training for AI to differentiate between user input & hidden commands, but this remains challenging.

Source:

The hindu

|

Practice Question

Q. With reference to indirect prompt injection attacks on AI chatbots, consider the following statements:

- Indirect prompt injection attacks happen through embedding malicious commands in content such as text, images, or documents.

- In indirect prompt injection, AI bots are directly given commands by the attackers to manipulate behavior.

- Large language models (LLMs) are more vulnerable to these attacks because they follow instructions coded in the text they process.

Which of the statements is/are correct?

(a) 1 and 2 only

(b) 1 and 3 only

(c) 2 and 3 only

(d) 1, 2 and 3

Answer: (b)

Explanation:

● Statement 1 is correct: Indirect prompt injection covers embedding malicious commands in various types of content.

● Statement 2 is incorrect: In indirect injection, the commands are hidden within content, not directly given by the user.

● Statement 3 is correct: LLMs are vulnerable because they are programmed to follow instructions in the text they process.

|