Description


Context

- Leading AI companies, such as OpenAI, are actively working on enhancing multimodal capabilities.

- OpenAI, for example, added image analysis and speech synthesis to ChatGPT, which runs on its GPT-3.5 and GPT-4 models.

- Google is also testing Gemini, its multimodal large language model. Google's advantage here is the vast repository of images and videos available from its search engine and YouTube.

Details

Introduction to Multimodal Artificial Intelligence

What is Multimodal AI?

- Multimodal Artificial Intelligence (MMAI) is an advanced subfield of artificial intelligence that integrates and processes data from various modalities, such as text, images, videos, audio, and sensor inputs.

- The primary goal of MMAI is to enable machines to understand, interpret, and interact with the world in a more human-like and holistic manner.

- Unlike traditional AI systems that focus on a single data type, multimodal AI leverages the combined power of multiple data sources to make more informed decisions.

The Importance of Multimodal AI

- The significance of multimodal AI lies in its ability to bridge the gap between human and machine understanding.

- By incorporating diverse sources of information, MMAI can enhance the quality of decision-making, provide context-awareness, and improve human-computer interactions.

- It finds applications in numerous domains, from healthcare and autonomous vehicles to marketing and entertainment.

Historical Development

- The roots of multimodal AI can be traced back to early research in computer vision, natural language processing, and speech recognition.

- Over time, technological advancements, the growth of big data, and the rise of deep learning have accelerated the development of MMAI.

- Notable milestones include the emergence of deep neural networks, the creation of multimodal datasets, and the development of powerful pretrained models.

Key Modalities in Multimodal AI

Text Data

- Text data is a fundamental modality in MMAI.

- It is the domain of natural language processing (NLP), which covers tasks such as sentiment analysis, text summarization, and machine translation.

- Combining text with other modalities can lead to more comprehensive document understanding and content recommendation systems.

Image Data

- Image data is central to computer vision and object recognition.

- It enables machines to interpret and extract information from visual content, making it indispensable in applications like facial recognition, autonomous vehicles, and medical imaging.

Video Data

- Video data extends beyond static images, enabling the analysis of dynamic scenes.

- Video-based MMAI is crucial in surveillance, action recognition, and social media content analysis.

Audio Data

- Audio data underpins speech recognition and sound analysis.

- Multimodal AI can combine audio with other modalities to improve speech-to-text conversion, audio scene analysis, and assistive technologies for individuals with hearing impairments.

Sensor Data

- Sensor data from IoT devices and environmental sensors provides real-time information about the physical world.

- Multimodal AI can fuse sensor data with other modalities to enhance decision-making in smart cities, agriculture, and industrial automation.

Techniques and Approaches in Multimodal AI

Data Fusion Techniques

- Data fusion methods in MMAI include early fusion (merging data at the input level), late fusion (combining outputs from separate models), and hybrid fusion (a combination of both).

- The choice of fusion technique depends on the specific problem and data characteristics.
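The difference between early and late fusion can be sketched in a few lines of Python. This is a minimal illustration, not a production approach: the feature vectors are made up, and `linear_score` is a hypothetical stand-in for a trained model. Hybrid fusion would combine the two strategies, for instance by averaging the early-fusion score with the late-fusion score.

```python
from typing import Callable, List

Vector = List[float]


def early_fusion(text_feats: Vector, image_feats: Vector) -> Vector:
    """Early fusion: concatenate features so a single model sees both modalities."""
    return text_feats + image_feats


def late_fusion(scores: List[float], weights: List[float]) -> float:
    """Late fusion: weighted average of scores produced by separate models."""
    total = sum(weights)
    if total == 0:
        raise ValueError("weights must not sum to zero")
    return sum(s * w for s, w in zip(scores, weights)) / total


def linear_score(weights: Vector) -> Callable[[Vector], float]:
    """Toy linear scorer (assumption: stands in for any trained model)."""
    return lambda feats: sum(w * x for w, x in zip(weights, feats))


# Made-up feature vectors for one sample.
text_feats = [0.2, 0.8]
image_feats = [0.5, 0.1, 0.4]

# Early fusion: one joint model over the 5-dimensional concatenated input.
joint_model = linear_score([1.0, 1.0, 1.0, 1.0, 1.0])
early_score = joint_model(early_fusion(text_feats, image_feats))

# Late fusion: each modality is scored on its own, then the scores are combined.
text_model = linear_score([1.0, 1.0])
image_model = linear_score([1.0, 1.0, 1.0])
late_score = late_fusion(
    [text_model(text_feats), image_model(image_feats)], weights=[0.5, 0.5]
)
```

Early fusion lets the model learn interactions between modalities but requires aligned inputs; late fusion keeps the per-modality models independent, which is convenient when one modality is sometimes missing.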

Deep Learning Models for Multimodal Data

- Deep learning has revolutionized multimodal AI.

- Models like convolutional neural networks (CNNs) for images, recurrent neural networks (RNNs) for text, and transformer-based architectures have paved the way for more effective multimodal data processing.

Transfer Learning and Pretrained Models

- Transfer learning is a key strategy in MMAI.

- Pretrained models, such as BERT for text and ResNet for images, can be fine-tuned for specific multimodal tasks. This approach leverages the knowledge acquired from large datasets and reduces the need for extensive labeled data.
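One common variant of this idea keeps the pretrained encoders frozen and trains only a small classification head on their outputs. The sketch below illustrates that pattern in plain Python. The two encoder functions are hypothetical toy stand-ins (they are not BERT or ResNet), and the four labeled examples are invented for illustration.

```python
import math
from typing import List, Tuple


def frozen_text_encoder(text: str) -> List[float]:
    """Hypothetical stand-in for a pretrained text model; never updated."""
    return [len(text) / 10.0, float(text.count("!"))]


def frozen_image_encoder(pixels: List[float]) -> List[float]:
    """Hypothetical stand-in for a pretrained image model; never updated."""
    return [sum(pixels) / len(pixels)]


def extract_features(text: str, pixels: List[float]) -> List[float]:
    """Concatenate the frozen text and image features."""
    return frozen_text_encoder(text) + frozen_image_encoder(pixels)


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict(w: List[float], b: float, x: List[float]) -> float:
    """Probability of the positive class from the trainable head."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def train_head(
    examples: List[Tuple[List[float], int]], epochs: int = 200, lr: float = 0.5
) -> Tuple[List[float], float]:
    """Train only the logistic-regression head with stochastic gradient descent."""
    w = [0.0] * len(examples[0][0])
    b = 0.0
    for _ in range(epochs):
        for x, y in examples:
            grad = predict(w, b, x) - y  # gradient of log loss w.r.t. the logit
            w = [wi - lr * grad * xi for wi, xi in zip(w, x)]
            b -= lr * grad
    return w, b


# Invented (text, image pixels, label) examples; 1 = positive, 0 = negative.
raw = [
    ("great!!", [0.9, 0.8], 1),
    ("bad", [0.1, 0.2], 0),
    ("love it!", [0.7, 0.9], 1),
    ("meh", [0.2, 0.2], 0),
]
examples = [(extract_features(t, p), y) for t, p, y in raw]
w, b = train_head(examples)
predictions = [predict(w, b, x) for x, _ in examples]
```

Because only the head's few parameters are learned, a handful of labeled examples is enough here, which mirrors the reduced need for labeled data described above. Full fine-tuning would also update the encoder weights.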

Cross-modal Retrieval

- Cross-modal retrieval is about retrieving information from one modality based on a query from another.

- Examples include finding images related to a text query or identifying relevant text for a given image. This is invaluable for content recommendation and search engines.
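Text-to-image retrieval is often implemented by embedding both modalities into a shared vector space and ranking candidates by similarity. The sketch below assumes such embeddings already exist: the three-dimensional vectors and file names are invented for illustration, and in practice they would come from a trained multimodal encoder.

```python
import math
from typing import Dict, List


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity between two vectors (0.0 if either has zero length)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: List[float], index: Dict[str, List[float]], k: int = 2) -> List[str]:
    """Return the k item names whose embeddings are most similar to the query."""
    ranked = sorted(index, key=lambda name: cosine(query, index[name]), reverse=True)
    return ranked[:k]


# Hypothetical image embeddings in a shared text-image space.
image_index = {
    "dog.jpg": [0.9, 0.1, 0.0],
    "cat.jpg": [0.1, 0.9, 0.0],
    "car.jpg": [0.0, 0.1, 0.9],
}

# Pretend embedding of the text query "a photo of a dog".
text_query = [0.8, 0.2, 0.1]

top_matches = retrieve(text_query, image_index, k=2)
print(top_matches)  # ['dog.jpg', 'cat.jpg']
```

The same function works in the other direction (image query, text index) as long as both modalities share the embedding space.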

Applications of Multimodal AI

- Multimodal Search and Recommendation Systems: Multimodal AI enhances search engines and recommendation systems by considering diverse user inputs, leading to more personalized and context-aware recommendations.

- Natural Language Processing (NLP) with Multimodal Data: MMAI improves NLP tasks by incorporating visual or auditory information, enabling better understanding of context and semantics.

- Healthcare and Medical Imaging: In healthcare, multimodal AI aids in disease diagnosis, medical imaging analysis, and patient monitoring through the fusion of text reports, medical images, and sensor data.

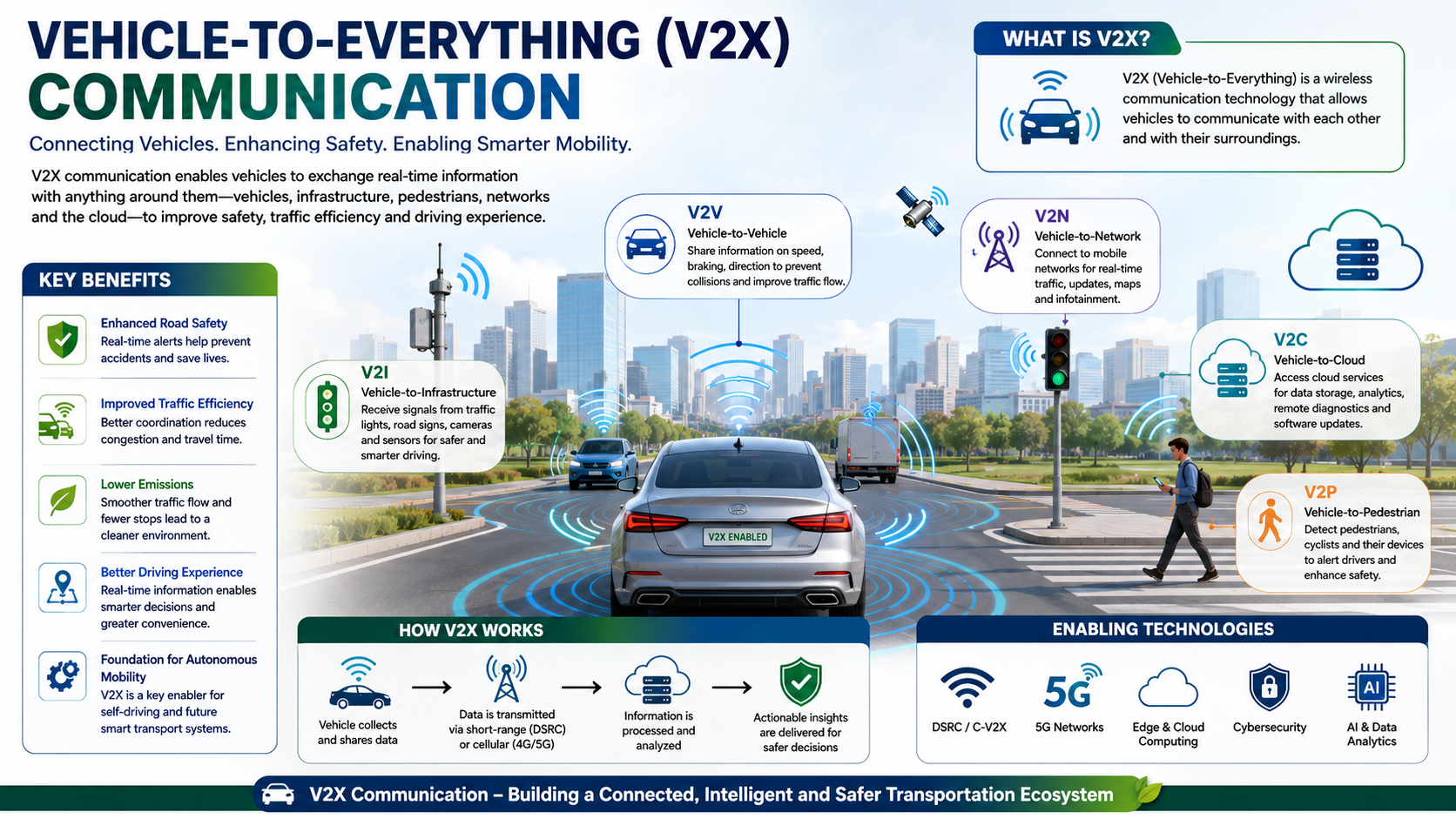

- Autonomous Vehicles: Autonomous vehicles rely on multimodal data for safe navigation, combining image recognition, sensor data, and NLP for communication with passengers.

- Augmented and Virtual Reality: MMAI plays a pivotal role in making AR and VR experiences more immersive and responsive by combining visual, audio, and sensor data.

- Multimedia Content Understanding: For content creators and consumers, multimodal AI improves content categorization, recommendation, and summarization in platforms like social media and video streaming services.

- Human-Robot Interaction: Multimodal AI enhances human-robot interactions by allowing robots to understand and respond to natural language, gestures, and visual cues.

- Assistive Technologies: For individuals with disabilities, multimodal AI enables assistive technologies that aid in communication and accessibility, such as speech recognition for speech-to-text conversion and speech synthesis for text-to-speech conversion.

Challenges in Multimodal AI

- Data Collection and Annotation: Gathering and annotating multimodal data is labor-intensive and often requires expertise. Data privacy and ethical concerns can also arise.

- Model Training and Computational Resources: The computational demands of multimodal AI can be significant, requiring access to powerful hardware and substantial computational resources.

- Cross-modal Semantic Understanding: Understanding the complex relationships between different modalities and extracting meaningful information from them is a formidable challenge.

- Privacy and Ethical Concerns: The use of multimodal data raises important ethical issues, including privacy, consent, and bias in AI algorithms.

Multimodal AI in Industry

- Multimodal AI in Marketing and Advertising: Businesses leverage MMAI to create highly personalized marketing campaigns and advertisements that draw on user behavior, demographics, and sentiment.

- Multimodal AI in Healthcare: In healthcare, MMAI supports disease diagnosis, patient monitoring, and drug discovery by combining various data sources.

- Multimodal AI in E-commerce: E-commerce platforms use multimodal AI for product recommendations, fraud detection, and improving the online shopping experience.

- Multimodal AI in Entertainment and Gaming: The entertainment industry benefits from MMAI through immersive gaming experiences, content recommendation, and interactive storytelling.

- Multimodal AI in Education: In education, multimodal AI enhances online learning platforms by providing personalized content, speech recognition for language learning, and interactive educational games.

Future Trends and Developments

- Advancements in Multimodal Pretrained Models: Ongoing research is focused on developing specialized pretrained models for multimodal tasks, enhancing model efficiency and performance.

- Interdisciplinary Research and Collaboration: Interdisciplinary collaboration between AI researchers and domain experts will play a vital role in advancing MMAI for real-world applications.

- The Role of Multimodal AI in AI Safety: Multimodal AI may contribute to AI safety by improving the interpretability and robustness of AI systems.

- Ethical Considerations and Regulation: The ethical use of multimodal AI will be a growing concern, leading to the development of regulations and guidelines to ensure responsible AI practices.


Conclusion

The future of MMAI holds great promise. With ongoing research and advancements in AI technologies, multimodal AI will continue to shape the way we interact with machines, paving the way for more intelligent, intuitive, and responsible AI systems.

MUST READ ARTICLES:

https://www.iasgyan.in/blogs/basics-of-artificial-intelligence


PRACTICE QUESTION

Q. Multimodal Artificial Intelligence (MMAI) is gaining prominence in various domains, from healthcare to marketing. Discuss the significance, challenges, and potential ethical concerns associated with MMAI. Also, elucidate its role in improving decision-making and interactions between humans and machines. (250 Words)
