Why in the news?

The Gujarat Police have launched NARIT AI (Narcotics Analysis & RAG-based Investigation Tool), becoming the first police force in India to use AI for investigation and prosecution.

NARIT AI (Narcotics Analysis & RAG-based Investigation Tool) is an artificial intelligence system developed by the Gujarat Police to strengthen investigations and prosecutions under the Narcotic Drugs and Psychotropic Substances (NDPS) Act, 1985.

How Does NARIT AI Function?

RAG Technology: It employs Retrieval-Augmented Generation (RAG) to draw on a secure, closed database instead of the open internet, which improves legal precision and reduces the risk of incorrect or fabricated output.

Case Analysis: Officers upload First Information Reports (FIRs), and the application generates structured analysis reports from them.

Procedural Guidance: It offers step-by-step instructions, evidence checklists, and investigator guidelines, and it identifies legal flaws in FIRs.

Courtroom Prediction: It predicts defense arguments and suggests rebuttals using Supreme Court and High Court precedents.

Data Training: The AI is specialized in the NDPS Act, BNS (Bharatiya Nyaya Sanhita), BNSS (Bharatiya Nagarik Suraksha Sanhita), and BSA (Bharatiya Sakshya Adhiniyam).
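The retrieval step behind RAG can be illustrated with a minimal sketch. It is not NARIT AI's actual implementation, which has not been published; the corpus entries, document IDs, and function names below are hypothetical placeholders, and simple word-overlap similarity stands in for whatever retrieval method the real system uses. The point it shows is that the model is handed only passages from a closed collection, never open-web text.

```python
import math
import re
from collections import Counter

# Hypothetical closed corpus: placeholder snippets, NOT real statutory text.
CORPUS = {
    "doc-search": "procedure for search and seizure of narcotic substances by an officer",
    "doc-sample": "sampling and sealing of seized contraband before a magistrate",
    "doc-bail": "conditions for grant of bail in commercial quantity cases",
}

def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())

def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, corpus, k=2):
    """Return up to k document IDs from the closed corpus, best match first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(text))), doc_id)
              for doc_id, text in corpus.items()]
    scored.sort(reverse=True)
    # Documents with no overlap at all are never passed to the model.
    return [doc_id for score, doc_id in scored[:k] if score > 0]

def build_prompt(query, corpus, k=2):
    """Assemble a prompt that restricts the model to the retrieved passages."""
    ids = retrieve(query, corpus, k)
    context = "\n".join(f"[{doc_id}] {corpus[doc_id]}" for doc_id in ids)
    return f"Answer using only the sources below.\n{context}\n\nQuestion: {query}"
```

Calling `build_prompt("search and seizure procedure", CORPUS)` yields a prompt whose context contains only the matching corpus entries, each tagged with its document ID so that an answer can cite its source.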

Key Features

Privacy: It is a private system developed exclusively for the Gujarat Police and is not accessible to the public.

Reliability: By relying on verified legal texts and government circulars, it aims to reduce the high rate of acquittals caused by minor procedural lapses.

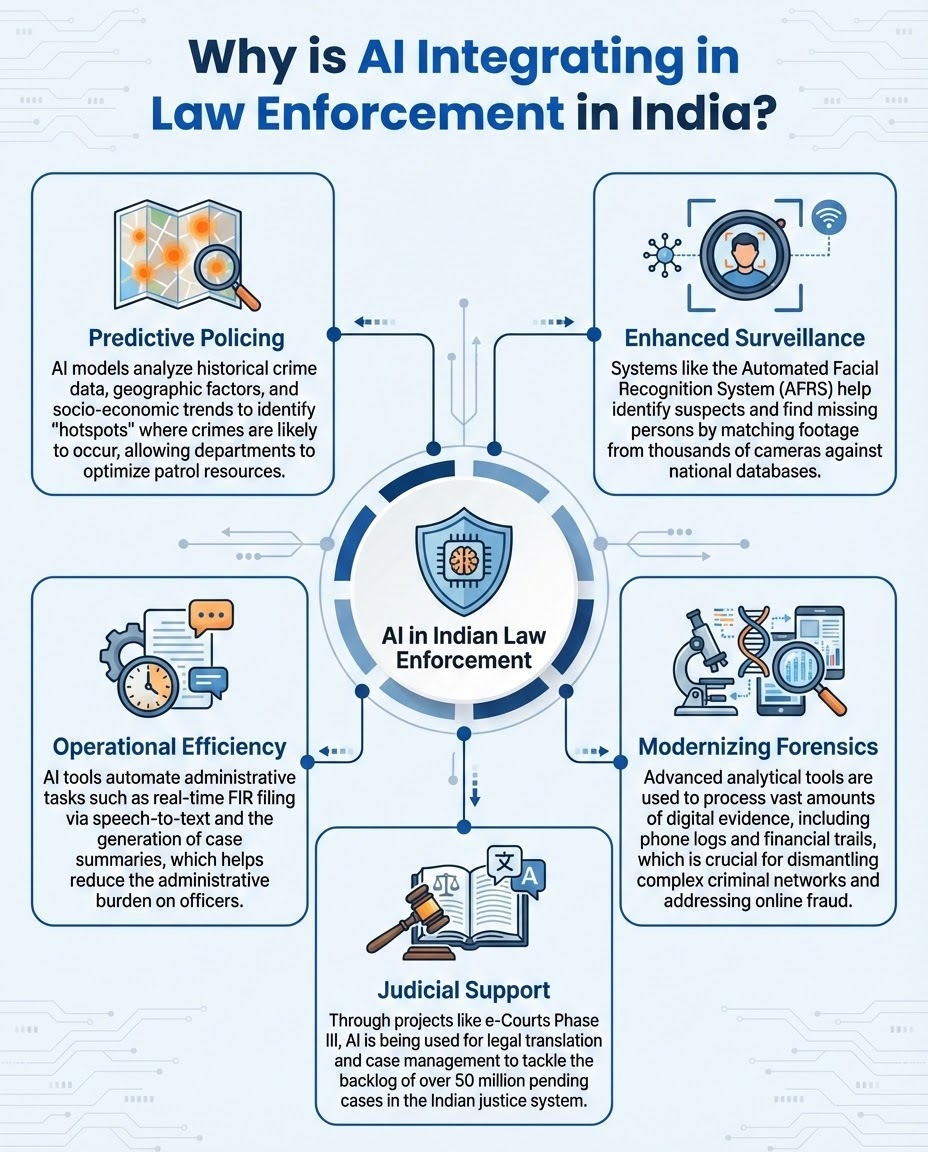

Enhanced Crime Detection and Prevention

Predictive Policing: AI algorithms analyze historical crime data, weather, and socio-economic factors to identify "hotspots," allowing departments to deploy patrols where crimes are most likely to occur.

Real-time Monitoring: AI-powered CCTV can automatically detect unusual activities like overcrowding, loitering, or even fallen persons in public spaces, triggering instant alerts for relief services.

Weapon and Intrusion Detection: Advanced models (like YOLO) can scan video feeds to identify firearms or unauthorized intrusions in high-security zones like prisons, enabling faster interventions before violence unfolds.
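The "hotspot" idea behind predictive policing can be sketched in its simplest form: divide the map into grid cells and rank cells by the number of recorded incidents. This is an illustrative assumption, not the method of any specific police system; real tools combine many more signals (such as the weather and socio-economic factors mentioned above), and the function name, cell size, and coordinates here are hypothetical.

```python
import math
from collections import Counter

def hotspot_cells(incidents, cell_size=1.0, top_n=3):
    """Rank grid cells by incident count.

    incidents: iterable of (x, y) coordinates of recorded incidents.
    cell_size: side length of each square grid cell, in the same units.
    Returns a list of ((cell_x, cell_y), count), highest count first.
    """
    counts = Counter()
    for x, y in incidents:
        # Map each coordinate to the integer index of its grid cell.
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        counts[cell] += 1
    return counts.most_common(top_n)

# Synthetic example data, invented for illustration only.
sample = [(0.2, 0.3), (0.5, 0.9), (0.7, 0.1), (1.2, 0.4), (1.8, 0.6), (3.5, 3.5)]
top = hotspot_cells(sample, cell_size=1.0, top_n=2)
```

Because the ranking is driven entirely by recorded incidents, areas that were patrolled more heavily in the past will tend to rank higher, which is the feedback-loop risk discussed under algorithmic bias.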

Operational Efficiency and Resource Management

Automated Administrative Tasks: Generative AI and speech-to-text tools help in real-time FIR filing and case file summarization, drastically reducing the administrative burden on officers.

Resource Optimization: AI-driven models assist in optimizing patrol routes and staffing based on real-time crime probability, ensuring limited manpower is used where it is needed most.

Accelerated Case Processing: Data analysis that traditionally took days of manual effort—such as verifying documents or cross-checking criminal records—can now be completed in minutes.

Investigative and Forensic Success

Facial Recognition (FRT): AI systems can match faces in crowds against national databases with an error margin below 10%, compared to 35% for traditional methods. (Source: SPAST)

Digital Evidence Analysis: AI can sift through massive volumes of unstructured data—including social media posts, emails, and financial trails—to identify hidden connections in complex cases like cybercrime and money laundering.

Facial Reconstruction: In January 2024, Delhi Police solved a "blind" murder case by using AI to reconstruct a lifelike image of the victim from a decomposed body, leading to his identification and the arrest of suspects.

Improving Public Safety and Justice

Judicial Support: Through projects like SUPACE and e-Courts Phase III, AI assists in smart case scheduling and legal research, which is essential for reducing India's backlog of more than 50 million pending cases.

Safety of Officers: Technologies like drones and robotic security devices can inspect dangerous situations, reducing the risk to the lives of police personnel during high-threat operations.

Algorithmic Bias and Discrimination

Historical Data Feedback Loops: AI trained on biased historical data may label over-policed areas as perpetually "high-risk," creating self-fulfilling prophecies for marginalized communities.

Demographic Inaccuracies: Facial Recognition Technology (FRT) is often less accurate for women, ethnic minorities, and those with darker skin tones, increasing wrongful detention risks.

Privacy and Surveillance Concerns

Mass Surveillance: The shift from traditional CCTV to AI-powered FRT enables real-time tracking of individual movements across entire cities, raising concerns about the normalization of a "surveillance state".

Erosion of Fundamental Rights: Continuous monitoring can have a "chilling effect" on the Right to Dissent and freedom of movement, violating the right to privacy affirmed in the Puttaswamy judgment.

Lack of Transparency and Accountability

Black Box Algorithms: Many policing tools are proprietary, meaning neither the public nor the judiciary can see how they reach specific decisions or "risk scores".

Automation Bias: Officers may over-rely on AI outputs, potentially overriding their own professional judgment and leading to accountability gaps when errors occur.

Regulatory and Legal Gaps

Absence of Specific Laws: India currently lacks a dedicated statutory framework governing the deployment, audit, and use of AI in law enforcement.

DPDPA Exemptions: Although the Digital Personal Data Protection (DPDP) Act, 2023 offers safeguards, critics claim its extensive national security exemptions for government agencies permit unregulated state surveillance.

Ethical and Social Impacts

Presumption of Guilt: Some researchers argue that predictive policing flips the constitutional principle of "innocent until proven guilty" by treating public movements as data to be analyzed for criminal anomalies.

Fragmentation of Responsibility: Determining who is at fault for an AI-led wrongful arrest—the developer, the data provider, or the officer—remains a complex legal challenge.

Robust Legal and Regulatory Frameworks

Statutory Frameworks: AI policing requires dedicated legislation to protect the Right to Privacy and define permissible uses.

High-Risk Restrictions: High-risk applications, like real-time facial recognition at protests, should be banned or strictly limited unless immediate national security threats arise.

Algorithmic Transparency and Auditing

Third-Party Bias Audits: AI models require frequent, independent auditing to mitigate biases based on gender, caste, or religion.

Explainability (XAI): AI systems must be interpretable, allowing the legal community to understand the reasoning behind generated risk scores or matches.
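One concrete check a bias audit might run is a comparison of false match rates across demographic groups. The sketch below is a simplified illustration under stated assumptions: the record format, group labels, and function names are hypothetical, and a real audit would use far larger samples and additional fairness metrics.

```python
from collections import defaultdict

def false_match_rate_by_group(records):
    """Compute the false match rate for each group.

    records: iterable of (group, predicted_match, actual_match) tuples.
    The rate is the share of true non-matches that the system wrongly
    flagged as matches. Groups with no true non-matches are omitted.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:
            negatives[group] += 1
            if predicted:
                false_positives[group] += 1
    return {g: false_positives[g] / n for g, n in negatives.items()}

def disparity_ratio(rates):
    """Ratio of the worst group's rate to the best group's rate (1.0 = parity)."""
    lowest, highest = min(rates.values()), max(rates.values())
    if highest == 0:
        return 1.0  # no false matches in any group
    return float("inf") if lowest == 0 else highest / lowest

# Synthetic audit sample, invented for illustration only.
records = (
    [("group_a", True, False)] + [("group_a", False, False)] * 3
    + [("group_b", True, False)] * 2 + [("group_b", False, False)] * 2
)
rates = false_match_rate_by_group(records)
ratio = disparity_ratio(rates)
```

In this synthetic sample the system wrongly flags members of one group twice as often as the other. An auditor would compare such a ratio against a threshold agreed in advance.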

Human-in-the-Loop (HITL) Protocols

Human Oversight: Officers must verify AI outputs before seeking warrants or making arrests to prevent automation bias.

Ongoing Training: Continuous training for law enforcement is essential to understand AI limitations and manage ethical risks.

Data Privacy and Security

Data Minimization: Agencies must collect only the data essential for a specific investigation and delete it once the legal objectives are met.

Secure Infrastructure: Utilize advanced encryption and access controls to protect sensitive databases from unauthorized leaks or hacking.

Accountability and Redressal

Oversight Mechanisms: Create independent bodies to investigate AI misuse or algorithmic errors.

Transparency: Maintain public registries of AI tools used by police to build community trust.

Phased Roadmap

According to the India AI Governance Guidelines (February 2026), the government is following a three-stage action plan:

Legal and Regulatory Evolution

Graded Liability: Authorities advise proportional accountability based on the AI's role and the specific risk level of the policing task.

Regulatory Updates: Current laws (IT Act, BNS) require review to define liability and data standards for AI evidence and predictive models.

Sandboxes: Controlled "sandboxes" should be used to test high-risk tools like facial recognition prior to broad implementation.

Institutional Capacity and Skilling

Professional Training: The Bureau of Police Research and Development (BPR&D) should create modules to educate officers on AI limitations and on avoiding "automation bias."

Public Literacy: Improving AI awareness for the public and officials is vital to ensure technology serves "social good" and fosters trust rather than just enabling surveillance.

Technological Safeguards

Techno-Legal Solutions: Experts recommend integrating "privacy-by-design" and "fairness-by-design" through automated bias checks and audit logs.

Human-Centric Approach: The "People First" strategy ensures AI remains an augmentative tool, requiring mandatory human-in-the-loop review for decisions affecting citizen rights.
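The two safeguards named above, audit logs and mandatory human review, can be combined in a small sketch. It assumes a hash-chained log in which each entry stores the hash of the previous one, so that later tampering is detectable. The function names and event fields are hypothetical and do not describe any deployed police system.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(event, prev_hash):
    """SHA-256 over the event and the previous entry's hash."""
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()

def append_entry(log, event):
    """Append an event to the log, chaining it to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)}
    log.append(entry)
    return entry

def verify(log):
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True

def act_on_ai_output(log, suggestion, reviewer=None, approved=False):
    """Allow action only when a named human reviewer has approved it.

    Every decision, including a refusal, is written to the audit log.
    """
    allowed = reviewer is not None and approved
    append_entry(log, {
        "suggestion": suggestion,
        "reviewer": reviewer,
        "approved": approved,
        "acted": allowed,
    })
    return allowed
```

With this gate, an AI suggestion that has no approving reviewer is recorded but never acted on, and `verify(log)` lets an oversight body confirm afterwards that the record was not edited.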

Conclusion

AI is the future of Indian policing as a "force multiplier" and part of the SMART vision, but its success depends on balancing proactive data-driven prevention with robust legal guardrails and a human-centered strategy.

Source: THE HINDU

PRACTICE QUESTION

Q. "The integration of Artificial Intelligence in law enforcement is a double-edged sword." Discuss. (150 words)

NARIT AI (Narcotics Analysis & RAG-based Investigation Tool) is an advanced artificial intelligence system launched by the Gujarat Police. It actively assists investigating officers in handling complex drug crimes under the NDPS Act, 1985, by analyzing FIRs, flagging legal weaknesses, and providing step-by-step procedural checklists.

India is strategically vulnerable to drug trafficking because it is wedged directly between the world's two largest illicit drug-producing hubs: the Golden Crescent (Afghanistan, Iran, Pakistan) to the west and the Golden Triangle (Myanmar, Thailand, Laos) to the east.

Key challenges include "algorithmic bias" (where the AI replicates historical policing biases against vulnerable communities), a "digital capacity deficit" among ground-level officers who lack digital literacy, and "automation bias" (where officers might blindly trust the AI's suggestions and ignore their own human intuition).

© 2026 iasgyan. All rights reserved